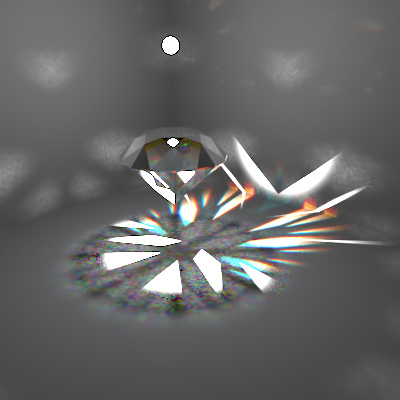

(800x800 pixels, 64 samples/pixel, 24 specular reflections max, 5 million caustic photons, Render time: ~2 days).

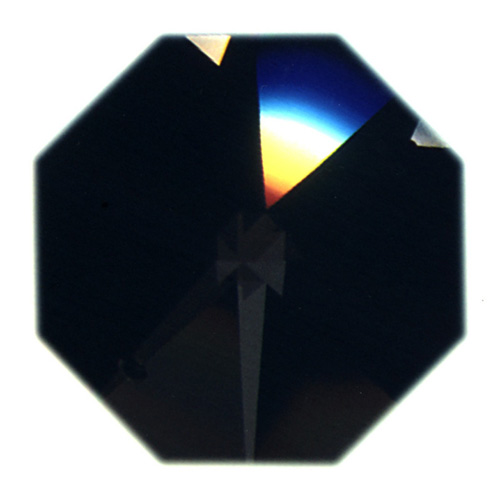

In my proposal, I set out to model some sort of wavelength-dependent surface interaction, as I've always been fascinated by the rainbow-like effects such phenomena produce. I decided on the gemstone idea for several reasons: gemstones exhibit amazing physical properties not often seen in day-to-day objects; I was inspired by a SIGGRAPH 2004 presentation on gemstones; and I found two papers by Sun et al. that discuss the implementation issues of light dispersion in a standard ray tracer. The two images I used as references are displayed below; one shows the amazing dispersion effects in the Century diamond, and the other shows a scene I sought to duplicate.

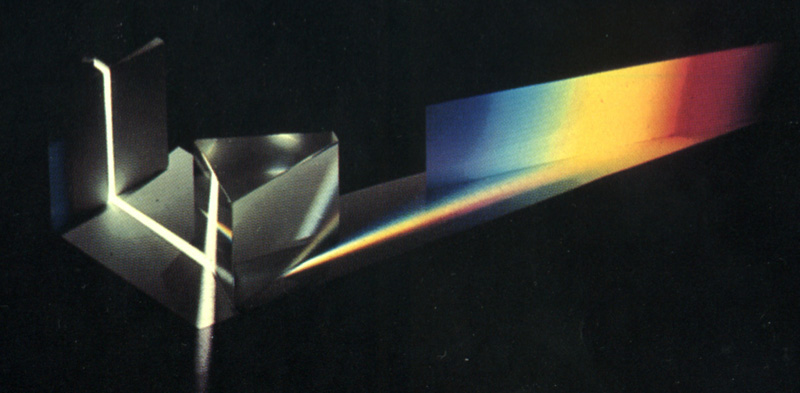

Light dispersion occurs in materials in which the index of refraction is not constant, but rather varies depending on the wavelength of incoming light. Snell's law still holds, but white light splits into monochromatic light, where each wavelength beam has a different index of refraction. The classic example is a sharp white light shot through a crystal prism or through a simple crystal:

Note that, in the crystal, the center of the 'dispersion rainbow' is white rather than green. Light sources are typically area lights rather than 'delta' lights, so in the middle of the rainbow all wavelengths of light reach the area light. This is characteristic of most real-world dispersion. Refractive index as a function of incident wavelength is given in the graph below for various dispersive materials. This function is typically simplified to two values: n_D, the index of refraction at wavelength 589.3nm, and DISP, the difference in index of refraction between wavelengths 430.8nm and 687.0nm, as displayed below.
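As a rough sketch of the two quantities just described (the notation $n(\lambda)$, $\theta_i$, and $\theta_t$ is mine, and I assume the light enters from air with index 1), Snell's law is applied once per wavelength, and DISP is the spread of the index between the two reference wavelengths:

$$
\sin\theta_i = n(\lambda)\,\sin\theta_t(\lambda), \qquad
n_D = n(589.3\,\mathrm{nm}), \qquad
\mathrm{DISP} = n(430.8\,\mathrm{nm}) - n(687.0\,\mathrm{nm})
$$

Assuming normal dispersion, where the index is larger at shorter wavelengths, blue light bends more than red, which is what fans white light out into a rainbow.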

There are several issues to resolve when modeling dispersion in a standard ray tracer like PBRT. BTDFs are typically implemented as functions that only take incident angle into account and have a single index of refraction; for dispersion, a BTDF also needs to know whether incident light is monochromatic or not, what wavelength(s) it represents, and how to model the refractive function. Rays themselves also need this additional state: whether they are monochromatic and what wavelengths they represent. Finally, I wanted to use the photon map integrator to handle the complex caustics created by gemstones, so this integrator would require dispersion functionality in both photon mapping and actual imaging.

The 'glue' used to handle many of these issues is the WavelengthFilter class I implemented. This is a very simple class that represents a ray's wavelength state. This class implements a series of Spectrum filters which are multiplied by other Spectrums along a ray's path to ensure we only keep a particular wavelength we're interested in. Initially, a ray is non-monochromatic, so the wavelength filter for the ray is [1, 1, 1] (assuming RGB) and the WavelengthFilter::isMonochromatic() function returns false. For a red monochromatic ray, the filter is [1, 0, 0] and isMonochromatic() returns true. In addition, WavelengthFilter stores actual wavelength values (in nanometers) corresponding to each filter, so the BTDF can use these to compute the index of refraction. The actual filters are hidden from other classes, which will call WavelengthFilter::setIndex() to set a filter with either a random index or a loop over all indices.
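To make the description above concrete, here is a minimal sketch of what such a class could look like. It is not the project's actual source: the `RGB` struct is a stand-in for pbrt's Spectrum, the `filter()` and `wavelengthNm()` accessors are hypothetical names, and the three wavelength values are illustrative placeholders rather than the ones the project used.

```cpp
#include <cassert>

// Stand-in for an RGB Spectrum (hypothetical; pbrt's Spectrum has a richer interface).
struct RGB {
    float c[3];
    RGB operator*(const RGB &o) const {
        return RGB{{c[0] * o.c[0], c[1] * o.c[1], c[2] * o.c[2]}};
    }
};

class WavelengthFilter {
public:
    static constexpr int kNumSamples = 3;  // one sample per RGB channel

    // A freshly constructed filter is non-monochromatic.
    bool isMonochromatic() const { return index_ >= 0; }

    // Select one monochromatic sample; callers pass a random index
    // or loop over all indices.
    void setIndex(int i) {
        assert(i >= 0 && i < kNumSamples);
        index_ = i;
    }

    // [1, 1, 1] while non-monochromatic, otherwise a single-channel mask
    // such as [1, 0, 0] for red.
    RGB filter() const {
        if (!isMonochromatic()) return RGB{{1.f, 1.f, 1.f}};
        RGB f{{0.f, 0.f, 0.f}};
        f.c[index_] = 1.f;
        return f;
    }

    // Wavelength in nanometers for the selected sample.
    // The values below are assumed for illustration only.
    float wavelengthNm() const {
        assert(isMonochromatic());
        static const float kWavelengths[kNumSamples] = {650.0f, 550.0f, 450.0f};
        return kWavelengths[index_];
    }

private:
    int index_ = -1;  // -1 means "not monochromatic"
};
```

Multiplying a Spectrum by `filter()` along the ray's path keeps only the selected channel, which is the behavior described above for a red monochromatic ray.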

To support passing this WavelengthFilter from the integrator through to the BSDFs, I had to alter several methods in reflection.[hc], essentially just adding this argument and ensuring that normal BSDFs would still function normally. I implemented a DispersiveTransmission BTDF, which is the only BSDF that actually uses the WavelengthFilter. DispersiveTransmission takes a base refractive index (n_D as explained above) and a change in refractive index at the extremes (DISP as explained above). Rather than representing the refractive index curve exactly, I approximate it through linear interpolation. The curve is relatively flat, so this approximation doesn't have a great effect visually. The BTDF is specular, so DispersiveTransmission::Sample_f() is the important function. In Sample_f(), there are two possibilities. If the incident light is not monochromatic (according to the WavelengthFilter), we randomly choose one of the monochromatic wavelength filters (adjusting the PDF appropriately); otherwise we keep the ray's current monochromatic filter. Either way, we get the WavelengthFilter's corresponding wavelength value to calculate the index of refraction, multiply the BTDF's transmission Spectrum by the WavelengthFilter, then proceed as normal. The WavelengthFilter is passed by reference so any changes are maintained. I implemented a Diamond material which is virtually identical to Glass, except that it uses the DispersiveTransmission BTDF.
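The two numerical steps in Sample_f() can be sketched as follows. This is an assumption-laden illustration, not the project's code: the function names are hypothetical, and the particular linear fit (a line through n_D at 589.3nm whose total drop between 430.8nm and 687.0nm equals DISP) is one plausible reading of "linear interpolation".

```cpp
#include <cassert>

// Linear stand-in for the refractive index curve (assumed form):
// passes through nD at 589.3 nm and falls by `disp` between 430.8 nm and 687.0 nm.
inline float DispersiveIOR(float nD, float disp, float wavelengthNm) {
    const float kLambdaD = 589.3f;
    const float kLambdaShort = 430.8f;
    const float kLambdaLong = 687.0f;
    return nD - disp * (wavelengthNm - kLambdaD) / (kLambdaLong - kLambdaShort);
}

// Uniformly pick one of numSamples wavelength samples from u in [0, 1)
// and fold the selection probability 1/numSamples into the running pdf.
inline int ChooseWavelengthIndex(float u, int numSamples, float *pdf) {
    assert(numSamples > 0 && pdf != nullptr);
    int i = static_cast<int>(u * numSamples);
    if (i >= numSamples) i = numSamples - 1;  // guard against u == 1
    *pdf /= numSamples;
    return i;
}
```

With diamond-like inputs (n_D around 2.417 and DISP around 0.044), the index varies by only a few hundredths across the visible range, which fits the observation above that the curve is relatively flat.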

Now for the photon map integrator, which I implemented as DispersionIntegrator. It is essentially identical to the regular PhotonIntegrator, with a couple of notable exceptions. When shooting photons, we keep a WavelengthFilter that is initialized to non-monochromatic and passed into BSDF::Sample_f(). If the ray happens to reach a DispersiveTransmission BTDF, it is randomly assigned a monochromatic WavelengthFilter, as explained above, which is carried through the rest of the photon's path. This method of randomly choosing a photon's wavelength works well with the rest of the photon shooting code (as opposed to the alternative of spawning photons for each wavelength sample). During actual imaging (the DispersionIntegrator::Li() function), we similarly maintain a WavelengthFilter for the current ray. If a non-monochromatic ray hits a specular, transmissive surface, we loop through all wavelength samples, setting the WavelengthFilter to each one in turn and then shooting the ray through the scene. So a non-monochromatic ray is essentially split into a tree of monochromatic rays. If a monochromatic ray hits a specular, transmissive surface, no looping is necessary and we simply continue as normal. One important note is that the looping only occurs if the BTDF is dispersive, which is checked with the BSDF::hasDispersiveComponent() function (I didn't want to hack the BxDFType functionality). There would be an imaging error, however, if the BSDF contained both a dispersive and a non-dispersive BTDF, as there would be a chance we loop for the non-dispersive component. This could be fixed with a check to see which one we sampled, but I never got around to it, and my scenes never would have triggered the error.

So, to summarize the integrator: when shooting a non-monochromatic photon into a dispersive surface, the DispersiveTransmission BTDF will randomly choose a wavelength for it; when shooting a regular imaging ray into a dispersive surface, the integrator itself will loop over all wavelength samples.
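The two strategies in this summary can be sketched side by side. Everything here is hypothetical scaffolding rather than the project's code: `RGB` stands in for Spectrum, `traceMono` stands in for recursively tracing one monochromatic ray, and scaling the photon's power by the number of samples is my assumed way of compensating for the 1/N selection probability.

```cpp
#include <algorithm>
#include <cassert>
#include <functional>

struct RGB { float c[3]; };  // stand-in for an RGB Spectrum

constexpr int kNumWavelengths = 3;

// Imaging ray: split into one monochromatic ray per wavelength sample and
// sum the results, keeping only channel i from the i-th ray (its filter).
inline RGB SplitIntoMonochromaticRays(const std::function<RGB(int)> &traceMono) {
    RGB sum{{0.f, 0.f, 0.f}};
    for (int i = 0; i < kNumWavelengths; ++i) {
        RGB L = traceMono(i);
        sum.c[i] += L.c[i];
    }
    return sum;
}

// Photon: pick a single wavelength from u in [0, 1) and carry it for the
// rest of the path. Dividing by the probability 1/N scales the kept channel by N.
inline RGB AssignPhotonWavelength(const RGB &power, float u, int *index) {
    assert(index != nullptr);
    int i = std::min(static_cast<int>(u * kNumWavelengths), kNumWavelengths - 1);
    *index = i;
    RGB out{{0.f, 0.f, 0.f}};
    out.c[i] = power.c[i] * kNumWavelengths;
    return out;
}
```

Averaged over many photons, the random choice deposits the same energy per channel as the explicit loop, but each photon path stays a single path instead of branching.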

I also implemented some optimizations in order to produce better-looking, more efficient caustics with such a complex diamond scene. A light can be specified as caustic or non-caustic (the default). The DispersionIntegrator has a "causticlights" flag. If false, it behaves normally. If true, the integrator will only use "caustic" lights when shooting photons. I wanted to light my scene with a spotlight over the diamonds plus some global illumination. Tracing caustics from the global light would not produce nice-looking results, as it is unlikely that a photon will hit a diamond, and if it does it will tend to result in a single speckle on the wall. So I treat the spotlight as the only "caustic" light, which produced noticeably better results, though it is somewhat of a hack.
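A minimal sketch of that light selection, under assumed names: `LightInfo`, `PhotonLightIndices`, and `PickPhotonLight` are hypothetical and only illustrate the effect of the "causticlights" flag, not how the integrator actually stores or samples its lights.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical per-light record; only the caustic flag matters here.
struct LightInfo {
    bool caustic;  // false corresponds to the default, non-caustic light
};

// Indices of the lights allowed to emit photons.
// With causticLights == false every light qualifies (normal behavior).
inline std::vector<std::size_t> PhotonLightIndices(const std::vector<LightInfo> &lights,
                                                   bool causticLights) {
    std::vector<std::size_t> out;
    for (std::size_t i = 0; i < lights.size(); ++i)
        if (!causticLights || lights[i].caustic) out.push_back(i);
    return out;
}

// Uniformly pick one emitting light from the candidates using u in [0, 1).
inline std::size_t PickPhotonLight(const std::vector<std::size_t> &candidates, float u) {
    assert(!candidates.empty());
    std::size_t k = static_cast<std::size_t>(u * candidates.size());
    if (k >= candidates.size()) k = candidates.size() - 1;
    return candidates[k];
}
```

With a global light marked non-caustic and a spotlight marked caustic, enabling the flag sends every photon from the spotlight, so the photon budget goes to the paths most likely to pass through the diamonds.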

Color space notes: I implemented the WavelengthFilter in terms of the default RGB color space. I originally intended to use the composite spectral model discussed in Sun's dispersion paper, but didn't have time to work out the kinks.

The final image is shown above and here. Despite the 3-wavelength approximation, the results are still acceptable, primarily due to the 'white-in-the-middle' dispersion effect common in crystals (discussed above). The exceptions are rare caustic photons on the wall, in which you can sometimes distinguish red, green, and blue, rather than a smooth rainbow. In addition, there are still some caustic speckles from very rare or complex bounces which could not be duplicated, even with 5 million photons. For comparison, here is the same scene with non-colored diamonds, with and without my dispersion implementation. The rainbow effect is strong in the big diamond, and there are many colored speckles in the pear-shaped diamond in the back. (Note that the two images use slightly different indices of refraction, which was accidental.)

Glass material

Diamond material

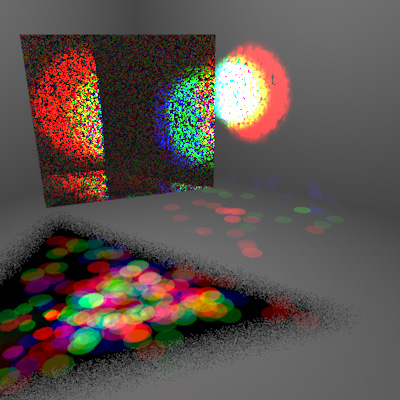

Here are some images I produced along the way. The first is a prism with an insanely high dispersion factor so I could verify the dispersion functionality. The second is my first diamond image, before I realized the diamond model had a right-handed coordinate system. The result: the indices of refraction are reversed at the surface and the rays explode into the scene, producing amazing patterns (despite the low quality render).

Prism with really high dispersion

Inside-out diamond

Sun, Drew, and Fracchia (2000). "Rendering Light Dispersion with a Composite Spectral Model," Proceedings of the International Conference on Color in Graphics and Image Processing.

Sun, Fracchia, and Drew (2000). "Rendering Diamonds," Proceedings of the 11th Western Computer Graphics Symposium.

Guy and Soler (2004). "Graphics Gems Revisited, Fast and Physically-Based Rendering of Gemstones," Proceedings of the 2004 Siggraph Conference.

I got my models from 3D Lapidary, opened the DXFs in Maya and exported them to OBJ. I used a tool I wrote (included in the source) to convert from OBJ to PBRT.