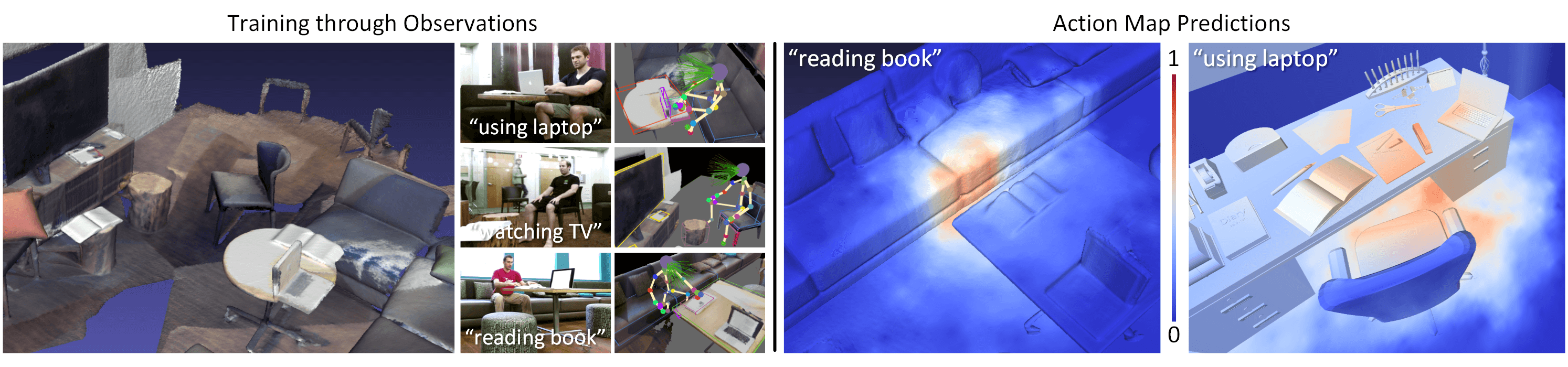

SceneGrok: Inferring Action Maps in 3D Environments

Stanford University

SIGGRAPH Asia 2014 Technical Papers Program

SIGGRAPH Asia 2014 Technical Papers Program

Abstract

With modern computer graphics, we can generate enormous amounts of 3D scene data. It is now possible to capture high-quality 3D representations of large real-world environments. Large shape and scene databases, such as the Trimble 3D Warehouse, are publicly accessible and constantly growing. Unfortunately, while a great amount of 3D content exists, most of it is detached from the semantics and functionality of the objects it represents. In this paper, we present a novel method to establish a correlation between the geometry and the functionality of 3D environments. Using RGB-D sensors, we capture dense 3D reconstructions of real-world scenes, and observe and track people as they interact with the environment. With these observations, we train a classifier which can transfer interaction knowledge to unobserved 3D scenes. We predict a likelihood of a given action taking place over all locations in a 3D environment and refer to this representation as an action map over the scene. We demonstrate prediction of action maps in both 3D scans and virtual scenes. We evaluate our predictions against ground truth annotations by people, and we present an approach for characterizing 3D scenes by functional similarity using action maps.