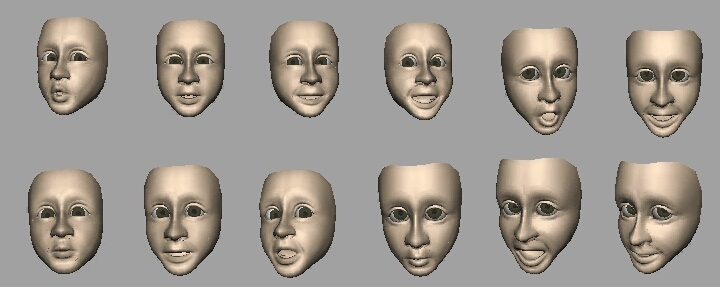

Head-E-Motion

When people engage in conversation or storytelling, they employ a rich set of nonverbal cues, such as head motion, eye gaze, and gesture, to emphasize their emotions. These nonverbal cues are very important for animating a realistic character. Modeling head motion is very challenging because there is no obvious correlation between the speech and expression content and the head motion: for the same sentence and expression, many different head motions are possible. We propose a new data-driven technique that generates realistic and idiosyncratic head motions.

Video (AVI DivX compression)

Paper (Stanford CS Tech Report CSTR 2003-02) (Word doc)