Course Description

An intermediate-level course emphasizing physically based simulation techniques for computer animation and synchronized sound synthesis. Topics vary from year to year but include the simulation of acoustic waves and integrated approaches to visual and auditory simulation of rigid bodies, deformable solids, collision detection and contact resolution, fracture, fluids and gases, and virtual characters. Students will read and discuss papers and complete open-ended projects with show-and-tell presentations.

Logistics

- Location: Gates B3, Tu/Th 4:30–5:50 PM

- Prerequisites: None required. Recommended: prior exposure to computer graphics and/or scientific computing.

- Textbook: None; lecture notes and research papers assigned as readings will be posted here.

- Communication: Slack

- LMS: Canvas

- Units: 3 or 4 (cross-listed; coursework is identical)

- Exams: None

Course Structure

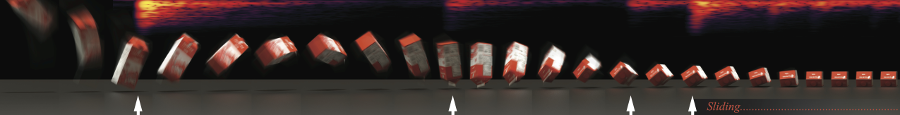

The course is organized around four projects of increasing scope: three focused assignments (HW1–HW3) followed by a self-directed final project. Each assignment period concludes with a show-and-tell session where students present their work. Lectures run throughout the quarter, covering the physics and algorithms behind animation sound synthesis.

Projects

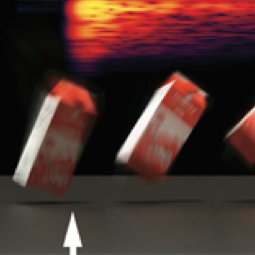

HW3: Making Noise

20%. Acceleration noise, combustion, aeroacoustics, fracture, and related methods.

Final Project

40%. Self-directed investigation on a student-selected topic related to physically based animation and sound.

Topics

Topics are chosen from the following (and vary by year):

- Acoustic waves and radiation

- Sound propagation and auralization

- Listening and head-related transfer functions (HRTFs)

- Rigid-body dynamics and contact modeling

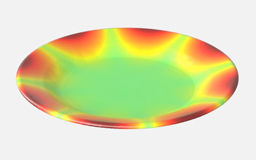

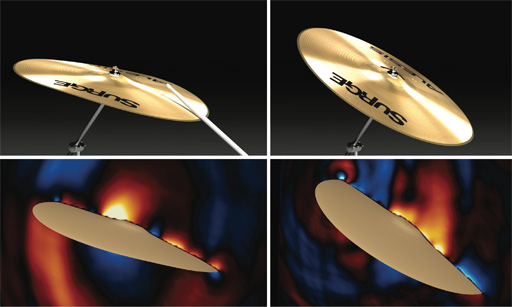

- Modal vibration analysis and synthesis

- Acoustic transfer for modal vibrations

- Acceleration noise for rigid-body impacts

- Fracture simulation and sound

- Reduced-order dynamics and thin shell sound

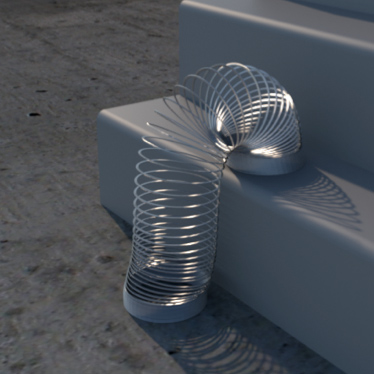

- Elastic rod dynamics and sound

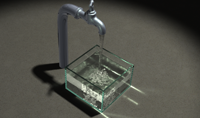

- Liquid and bubble acoustics

- Fire and combustion sound

- Turbulence and aeroacoustic sound

- Cloth simulation and sound

- Time-domain wave-based sound synthesis

- Inverse-Foley animation

- Machine learning for sound generation

- Integrated animation and sound systems

- Selected topics (based on student interest)

Demos

Interactive demos are hosted on GitHub Pages.

- Acoustic Point Sources — Monopole, dipole, and Green's function radiation in 2D

- Plane Wave Scattering by a Cylinder — Plane wave diffraction around a rigid cylinder

- Modal Sound Explorer — Strike strings, bars, membranes, and plates

- Bubble Sound Bank — Shape drips, rain, streams, and waterfalls

- Acoustic Bubble Simulator — Sound of an air bubble oscillating underwater

- Fire Sound: Spectral Bandwidth Extension — Add mid- and high-frequency power-law noise to low-frequency simulated fire sound (Chadwick & James 2011)

- Fire Sound: Texture Synthesis — Combine high-frequency content from real fire recordings with low-frequency simulated audio via multi-resolution texture synthesis
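As a rough illustration of the physics behind the Acoustic Bubble Simulator listed above, the sketch below computes the Minnaert resonance frequency of a spherical air bubble in water and generates a short, exponentially decaying tone at that frequency. It is not the demo's code. The function names, the sample rate, and especially the damping rate `decay_per_s` are assumptions chosen for illustration, and surface tension and viscosity are ignored.

```python
import math


def minnaert_frequency(radius_m, gamma=1.4, pressure_pa=101325.0, density=998.0):
    """Minnaert resonance frequency (Hz) of a spherical air bubble in water.

    f0 = sqrt(3 * gamma * p0 / rho) / (2 * pi * r0)
    Defaults assume air (gamma = 1.4), atmospheric pressure, and fresh water.
    """
    return math.sqrt(3.0 * gamma * pressure_pa / density) / (2.0 * math.pi * radius_m)


def bubble_samples(radius_m, duration_s=0.05, sample_rate=44100, decay_per_s=150.0):
    """Return a damped sinusoid at the bubble's Minnaert frequency.

    decay_per_s is a hypothetical, hand-picked damping rate (1/s); a physical
    model would derive it from radiative, thermal, and viscous losses.
    """
    f0 = minnaert_frequency(radius_m)
    n = round(duration_s * sample_rate)
    samples = []
    for i in range(n):
        t = i / sample_rate
        samples.append(math.exp(-decay_per_s * t) * math.sin(2.0 * math.pi * f0 * t))
    return samples


if __name__ == "__main__":
    radius = 1e-3  # 1 mm bubble
    print(f"Minnaert frequency: {minnaert_frequency(radius):.0f} Hz")
    print(f"Generated {len(bubble_samples(radius))} samples")
```

With these defaults, a 1 mm bubble resonates at about 3.3 kHz. Halving the radius doubles the pitch, which is why small drips sound higher than large ones.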

Schedule

Materials will be posted throughout the quarter. Dates and topics may shift.

| Date | Topic | Materials |
|---|---|---|
| Tue Mar 31 | Introduction | Slides (PDF) |
| Thu Apr 2 | Acoustic Waves and Radiation | Slides (PDF), References |
| Tue Apr 7 | Acoustic Waves and Radiation (cont.) | |
| Thu Apr 9 | HW1 Show-and-Tell | |
| Thu Apr 9 | Modal Sound Synthesis | Slides (PDF), References |
| Tue Apr 14 | Modal Sound Synthesis (cont.) | |
| Thu Apr 16 | Bubble-based Liquid Sounds | Slides (PDF), References |
| Tue Apr 21 | Acceleration Noise | Slides (PDF), References |
| Thu Apr 23 | HW2 Show-and-Tell | |
| Thu Apr 23 | Fire Sound (half lecture) | Slides (PDF), References |
| Tue Apr 28 | Fire Sound (cont.) | |
| Thu Apr 30 | Fracture Sound (half lecture) | References |
| Thu Apr 30 | Elastic Rods (half lecture) | References |
| Tue May 5 | Thin Shells | References |
| Thu May 7 | HW3 Show-and-Tell | |
| Thu May 7 | Aerodynamic Sound (half lecture) | Forthcoming, References |
| Tue May 12 | Time-Domain Wave-Based Synthesis | Forthcoming, References |
| Thu May 14 | Final Project Proposals | |
| Thu May 14 | Inverse-Foley Animation & Control (half lecture) | Forthcoming, References |
| Tue May 19 | Atmospheric and Oceanic Sound Propagation | Forthcoming |
| Thu May 21 | Underwater Sound Synthesis | Forthcoming |
| Tue May 26 | Active Noise Cancellation (ANC) | Forthcoming |
| Thu May 28 | Guest Lecture: Kangrui Xue | Forthcoming, References |
| Tue Jun 2 | Final Project Presentations | |

Grading

| Component | Weight |
|---|---|
| HW1: Hello Animation Sound | 20% |
| HW2: Practical Modal Sound | 20% |
| HW3: Making Noise | 20% |
| Final Project | 40% |

The course is cross-listed as 3 and 4 units; coursework is identical regardless of unit count. Show-and-tell participation is part of each project grade.

AI, Tools, and Collaboration Policy

You are encouraged to use AI and other tools freely, including for literature searches, research, API questions, code generation (e.g., Claude Code), and writing assistance. You are also welcome to use third-party animation tools, software libraries, and assets.

All third-party resources must be acknowledged. Just as you would cite a software library in a project README, any use of AI-assisted code or writing, third-party assets, or substantive help from collaborators should be credited in your project documentation. Failure to acknowledge these contributions is a form of plagiarism and may result in not receiving credit for the course.

Cite all AI-generated material and/or explain how you have drawn on AI-generated material in your work.

Your evaluation is holistic, based on your effort, creative exploration, participation in discussions, presentations of your work, and overall contributions to the course—not on whether you wrote every line of code by hand.

Be thoughtful about data and privacy. Review and follow the guidelines provided in Stanford IT's resource on Responsible AI at Stanford. When using a third-party AI tool that is not Stanford-approved, such as a personal ChatGPT account, read the terms of service carefully before signing up. Avoid inputting information that should not be made public. This includes personal or confidential information of your own or that others share with you, as well as proprietary or copyrighted materials.

Be prepared to fact-check and critically evaluate all AI-generated information. Generative AI tools can provide false information (called “hallucinations”), perpetuate biases and/or stereotypes, or draw on copyrighted information without proper attribution, and such problematic information is often presented very convincingly. The materials these tools generate do not necessarily meet the standards of this course.

This policy operates within the broader framework of Stanford policies on generative AI use and academic integrity. If any conflict arises between this description and university-level policy, the university policy takes precedence.