Learning to Navigate the Energy Landscape

Microsoft Research

Stanford University

University of Oxford

Abstract

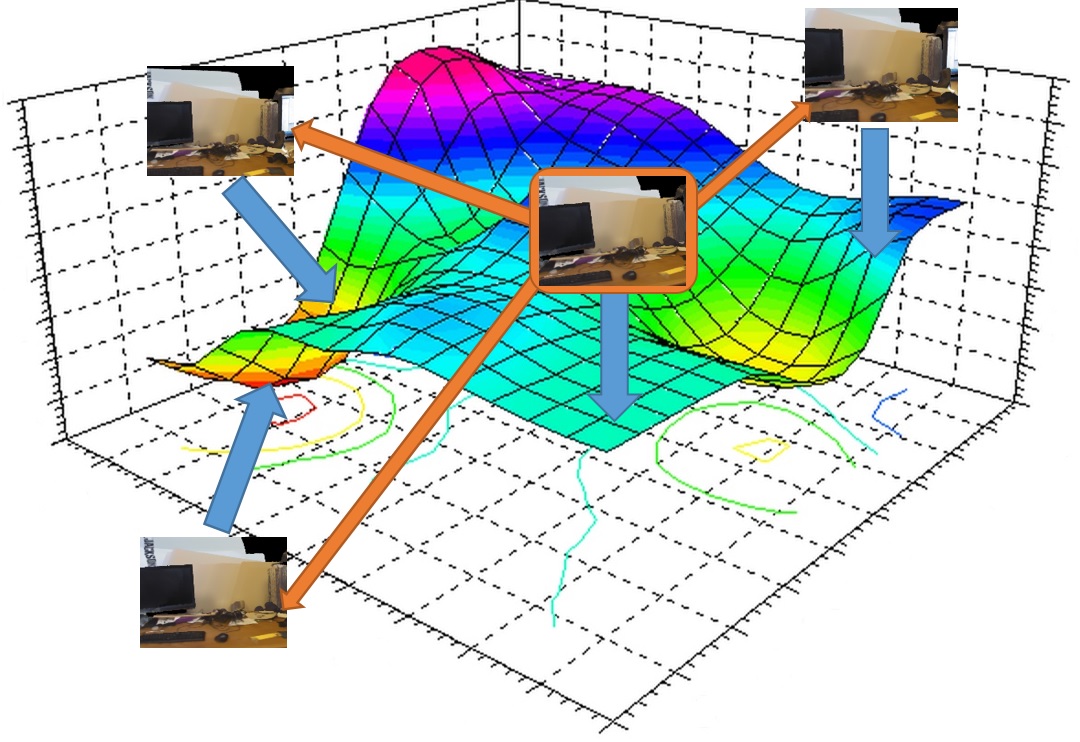

In this paper, we present a novel, general, and efficient architecture for addressing computer vision problems that are approached from an 'Analysis by Synthesis' standpoint. Analysis by synthesis involves the minimization of reconstruction error, which is typically a non-convex function of the latent target variables. State-of-the-art methods adopt a hybrid scheme in which discriminatively trained predictors, such as Random Forests or Convolutional Neural Networks, are used to initialize local search algorithms. While these hybrid methods have been shown to produce promising results, they often get stuck in local optima. Our method goes beyond the conventional hybrid architecture by not only proposing multiple accurate initial solutions but also defining a navigational structure over the solution space that can be used for extremely efficient gradient-free local search. We demonstrate the efficacy and generalizability of our approach on tasks as diverse as Hand Pose Estimation, RGB Camera Relocalization, and Image Retrieval.

Paper | Dataset | BibTeX citation

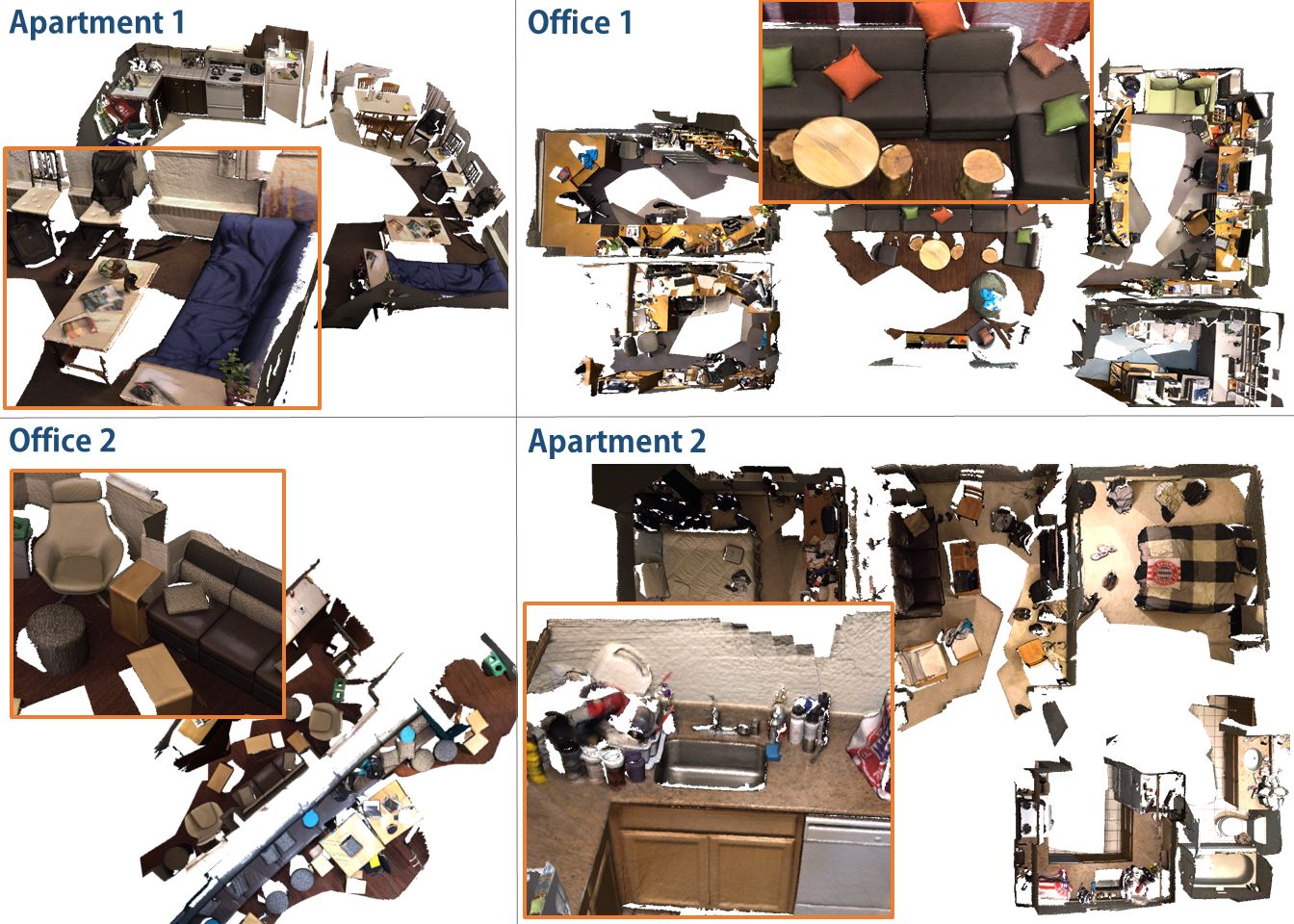

We provide a dataset containing RGB-D data of 4 large scenes, comprising a total of 12 rooms, for the purpose of RGB and RGB-D camera relocalization. The RGB-D data was captured using a Structure.io depth sensor coupled with an iPad color camera. Each room was scanned multiple times, and the resulting sequences were run through a global bundle adjustment to obtain globally aligned camera poses across all sequences of the same scene. Please refer to the respective publication when using this data.

Format

Each sequence contains:

- Color frames (frame-XXXXXX.color.jpg): RGB, 24-bit, JPG

- Depth frames (frame-XXXXXX.depth.png): depth (mm), 16-bit, PNG (invalid depth is set to 0)

- Camera poses (frame-XXXXXX.pose.txt): camera-to-world

- Camera calibration (info.txt): color and depth camera intrinsics and extrinsics. Note that these are the default intrinsics and we did not perform any calibration.
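The file layout above can be handled with a few small helpers. The following is a minimal sketch rather than an official loader, and it rests on assumptions not stated on this page: that `XXXXXX` is a six-digit, zero-padded frame index, and that each pose file holds 16 whitespace-separated numbers forming a row-major 4x4 homogeneous matrix. The function names (`frame_files`, `depth_mm_to_m`, `parse_pose`, `camera_position`) are hypothetical, and decoding the PNG itself is left to an image library of your choice.

```python
# Hypothetical helpers for the per-frame files described above.
# ASSUMPTION: XXXXXX is a six-digit, zero-padded frame index.
FRAME_PATTERN = "frame-{index:06d}.{kind}"


def frame_files(index):
    """Return the three per-frame file names for a given frame index."""
    return {
        "color": FRAME_PATTERN.format(index=index, kind="color.jpg"),
        "depth": FRAME_PATTERN.format(index=index, kind="depth.png"),
        "pose": FRAME_PATTERN.format(index=index, kind="pose.txt"),
    }


def depth_mm_to_m(raw_values):
    """Convert raw 16-bit depth values (millimetres) to metres.

    A raw value of 0 marks invalid depth and is mapped to None.
    """
    return [None if v == 0 else v / 1000.0 for v in raw_values]


def parse_pose(text):
    """Parse the contents of a pose file into a 4x4 nested list.

    ASSUMPTION: the file holds 16 whitespace-separated numbers that
    form a row-major 4x4 homogeneous camera-to-world matrix.
    """
    values = [float(token) for token in text.split()]
    if len(values) != 16:
        raise ValueError("expected 16 values, got %d" % len(values))
    return [values[row * 4:(row + 1) * 4] for row in range(4)]


def camera_position(pose):
    """Return the camera centre in world coordinates.

    For a camera-to-world matrix this is the translation column.
    """
    return [pose[0][3], pose[1][3], pose[2][3]]
```

For example, `frame_files(12)["depth"]` gives `frame-000012.depth.png`, and passing the text of the matching pose file to `parse_pose` yields a matrix whose last column (via `camera_position`) is the camera location in the scene's global frame, provided the assumed layout holds.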