Activity-centric Scene Synthesis for Functional 3D Scene Modeling

ACM Transactions on Graphics 2015 (TOG)


Abstract

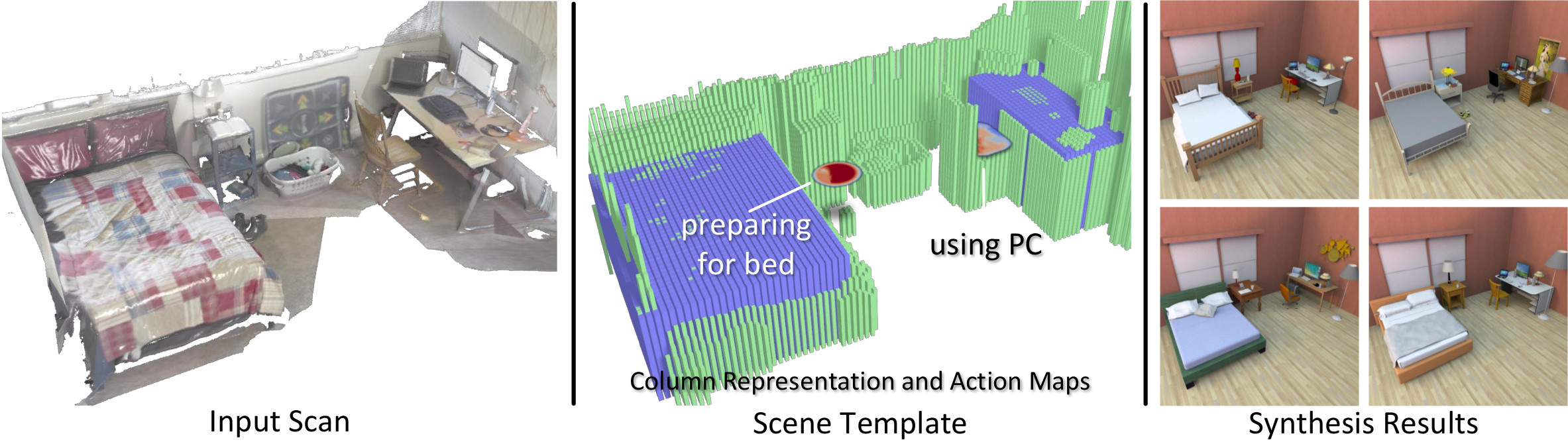

We present a novel method to generate 3D scenes that allow the same activities as real environments captured through noisy and incomplete 3D scans. As robust object detection and instance retrieval from low-quality depth data are challenging, our algorithm aims to model semantically correct rather than geometrically accurate object arrangements. Our core contribution is a new scene synthesis technique which, conditioned on a coarse geometric scene representation, models functionally similar scenes using prior knowledge learned from a scene database. The key insight underlying our scene synthesis approach is that many real-world environments are structured to facilitate specific human activities, such as sleeping or eating. We represent scene functionalities through virtual agents that associate object arrangements with the activities for which they are typically used. When modeling a scene, we first identify the activities supported by a scanned environment. We then determine semantically plausible arrangements of virtual objects, retrieved from a shape database and constrained by the observed scene geometry. For a given 3D scan, our algorithm produces a variety of synthesized scenes that support the activities of the captured real environment. In a perceptual evaluation study, we demonstrate that our results are judged to be visually appealing and functionally comparable to manually designed scenes.
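The two-stage flow described in the abstract (first identify supported activities, then arrange objects under geometric constraints) can be illustrated with a deliberately simplified toy sketch. This is not the paper's method, which learns activity models from a scene database. Everything here is a hypothetical stand-in: the hand-written `ACTIVITY_TEMPLATES` table, the `threshold` overlap rule, and the first-fit placement into free cells of a coarse occupancy grid.

```python
from dataclasses import dataclass

# Hypothetical activity templates (illustrative only, not learned):
# each activity lists the object categories an agent would typically use.
ACTIVITY_TEMPLATES = {
    "sleeping": ["bed", "nightstand", "lamp"],
    "eating": ["table", "chair"],
    "working": ["desk", "chair", "monitor"],
}


@dataclass
class Placement:
    category: str
    cell: tuple  # (row, col) in a hypothetical coarse occupancy grid


def identify_activities(observed, threshold=0.5):
    """Return activities whose template categories appear among the coarse
    observed labels in at least a `threshold` fraction (toy rule)."""
    supported = []
    for activity, objects in ACTIVITY_TEMPLATES.items():
        overlap = sum(1 for o in objects if o in observed) / len(objects)
        if overlap >= threshold:
            supported.append(activity)
    return supported


def arrange(activities, free_cells):
    """Assign each distinct object needed by the supported activities to a
    free grid cell, first-fit in sorted cell order. Objects beyond the number
    of free cells are dropped (zip stops at the shorter sequence)."""
    needed = []
    for activity in activities:
        for obj in ACTIVITY_TEMPLATES[activity]:
            if obj not in needed:
                needed.append(obj)
    cells = sorted(free_cells)
    return [Placement(obj, cell) for obj, cell in zip(needed, cells)]


if __name__ == "__main__":
    acts = identify_activities({"bed", "lamp", "chair"})
    print(acts)
    for p in arrange(acts, {(0, 0), (0, 1), (1, 0), (1, 1), (2, 0)}):
        print(p)
```

With the observed labels `{"bed", "lamp", "chair"}`, the toy rule accepts "sleeping" (2 of 3 objects seen) and "eating" (1 of 2). It rejects "working" (1 of 3). A real system would replace the fixed templates and first-fit placement with learned priors and geometry-aware optimization.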

Extras

Paper: PDF

SIGGRAPH presentation slides: PPTX

Video: MP4

author = {Fisher, M. and Savva, M. and Li, Y. and Hanrahan, P. and Nie{\ss}ner, M.},
title = {Activity-centric Scene Synthesis for Functional 3D Scene Modeling},
journal = {ACM Transactions on Graphics (TOG)},
volume = {34},
number = {6},
year = {2015},
publisher = {ACM}
}